Earlier this year, MacUpdater shut down after eight years. If you’re not familiar, it was a Mac utility that helped you track, manage, and install updates across all of your applications. It maintained a database of over 150,000 apps, scanned your Mac against it, and kept you aware of what needed attention. The team behind it had been selling one-year licenses, and after struggling to find a sustainable business model, they decided not to continue.

I’d been relying on it for years. My first instinct was to look for a replacement. That’s how it’s always worked. A tool goes away, you find the next one, you pay the subscription or the one-time fee, and you move on.

But this time I did something different. I built my own.

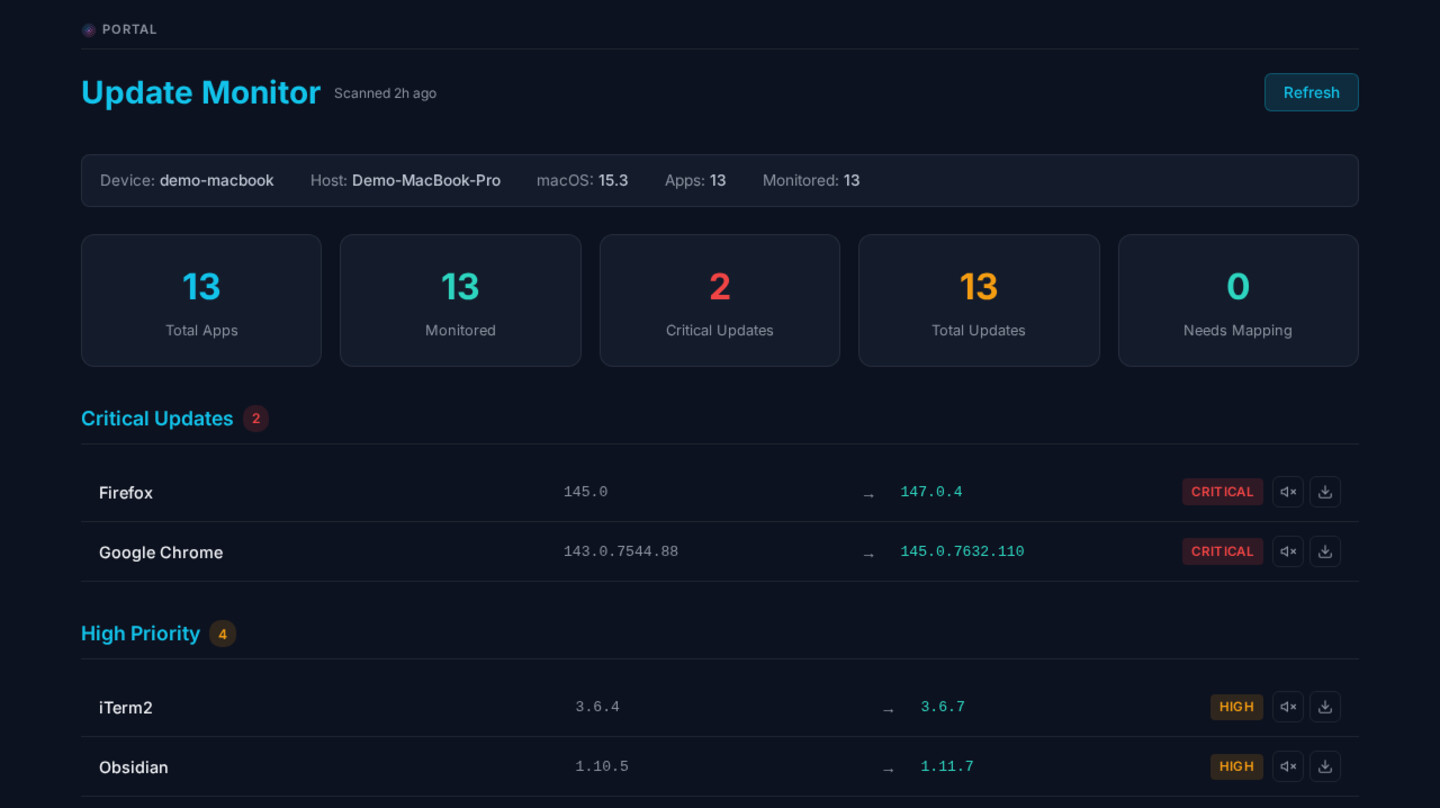

Update Monitor

Not as a product. Not to sell. Just for me. I built a small system I’ve been calling Update Monitor. There’s a lightweight component on each of my Macs that scans what’s installed and sends the catalog up to a central service. That service maintains a list of all the software sources my Macs report in on and checks for the latest versions. It classifies updates as low priority or critical based on my own priorities and known sensitivities of things like browsers and other internet-connected software. It tracks published security updates and known vulnerabilities. And it reports back to me when something needs my attention.
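For anyone curious what the per-Mac scan might look like, here is a minimal sketch in Python. It is not the actual Update Monitor code. It assumes the scan only needs each app bundle's identifier, name, and version from its `Info.plist`; the function name `scan_apps` and the shape of the catalog are made up for illustration.

```python
import plistlib
from pathlib import Path


def scan_apps(apps_dir):
    """Return a list of {bundle_id, name, version} dicts, one per .app bundle.

    Hypothetical helper: the catalog shape is an assumption, not the
    format the real Update Monitor uses.
    """
    catalog = []
    for bundle in sorted(Path(apps_dir).glob("*.app")):
        info_path = bundle / "Contents" / "Info.plist"
        if not info_path.is_file():
            continue  # not a standard bundle; skip rather than guess
        try:
            with info_path.open("rb") as fh:
                info = plistlib.load(fh)
        except Exception:
            continue  # unreadable or malformed plist; skip this bundle
        catalog.append({
            "bundle_id": info.get("CFBundleIdentifier", ""),
            "name": info.get("CFBundleName", bundle.stem),
            "version": info.get("CFBundleShortVersionString", ""),
        })
    return catalog
```

Calling `scan_apps("/Applications")` on a Mac would produce the catalog that gets sent up to the central service. Keeping the scan this small is one way to keep the footprint on each machine low.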

The whole thing took a few focused sessions with my AI assistant. Maybe an hour before we had something functional, then a few more sessions over the following days to refine it. And it actually works better for me than the commercial product did.

I can mark my own severity levels. I can hunt down sources for more obscure software that a commercial database might not cover well. The system is tuned to how I think about risk, not how a product thinks I should.
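The severity idea can be sketched in a few lines. This is an illustration under assumptions, not the real classifier: the bundle identifiers, the `classify` function, and the three labels are all hypothetical, and the version comparison is a simple numeric one that will not handle every versioning scheme.

```python
import re

# Hypothetical list of apps I would always treat as critical,
# e.g. browsers and other internet-connected software.
CRITICAL_BUNDLE_IDS = {"com.example.browser"}


def parse_version(text):
    """Turn '1.10.0' into (1, 10) so versions compare numerically."""
    parts = [int(p) for p in re.findall(r"\d+", text)]
    while parts and parts[-1] == 0:
        parts.pop()  # treat '2.0' and '2.0.0' as the same version
    return tuple(parts)


def classify(app, latest_version, has_security_fix=False,
             critical_ids=CRITICAL_BUNDLE_IDS):
    """Return 'current', 'low', or 'critical' for one installed app."""
    if parse_version(latest_version) <= parse_version(app["version"]):
        return "current"
    if has_security_fix or app["bundle_id"] in critical_ids:
        return "critical"
    return "low"
```

The point of the sketch is that the rules are mine to edit. Adding an app to the critical list, or changing what counts as critical, is a one-line change rather than a feature request.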

There’s more to it than that, though. I appreciated MacUpdater, and I ultimately decided to trust it, but I never loved that I was scanning all of my apps daily and sending that data out to a company that was hard to find details about. That was the trade: they maintained the database and the Mac software, and in exchange I sent them a complete picture of everything installed on my machines.

With Update Monitor, I was able to shift that model. The scan on my computer is as lightweight as I can make it, keeping the footprint on my machines low. The data goes to my own service, which in my case runs as part of my AI assistant infrastructure. It didn’t have to be set up that way. It could have been a standalone service. I tie it in because the assistant helps me prioritize updates in the context of other things I’m working on, and it’s been useful for hunting down and maintaining the sources.

Source maintenance was one of the things that made MacUpdater challenging to sustain. Their team maintained a config file with tens of thousands of app entries and a version database with millions of records. They wrote that the daily maintenance took more than a full day to perform, even with a trained team. My list is a lot smaller, and it’s just for me. With the assistant handling most of the source maintenance, I mostly just need to make sure the sources don’t change on me, and I even have it watching for that. Day to day, the scanning and reporting are deterministic.

For someone who thinks about trust and supply chain risks professionally, all of that adds up.

It’s Not Just One Tool

It’s interesting to step back and look at just how much custom software has found its way into my life and workflow, especially over the last few months. Dashboards for tracking projects and compliance. Monitors for services and system health. An assistant infrastructure with skills I’ve built for specific workflows. Media production tooling. Content pipelines. A personal podcast system that turns articles into audio. Gaming tools for friends. Security tools I run on my own network.

None of these are products. None of them are on the app store. They’re things I built because I needed them, and the AI tools made the building fast enough that it was worth doing.

This isn’t just me, either. Build or buy is in the news right now because investors are watching SaaS companies and wondering what it means for their growth when more people can build their own tools. Stock prices are reacting. There’s a big conversation about whether the economics of software subscriptions are shifting.

I think there’s some truth to that, but also likely some overcorrection. A colleague recently pointed out that a lot of what makes large SaaS products viable isn’t just the software itself. It’s the compliance certifications, the liability and insurance, the support infrastructure, the years of security hardening. Millions of companies are not going to stop using their existing tools. And for software that stores large amounts of sensitive data, like communication platforms, file sharing services, or anything customer-facing, the security requirements alone are significant. That need is very unlikely to go away entirely.

But for a growing number of people and a growing range of use cases, the cost of building something custom has dropped to the point where it makes sense. Not for everything. But for more things than it used to.

The Shift

A year ago, building something custom meant either learning enough to write it yourself, which could take weeks or months or much longer depending on the project, or hiring someone, which can cost quite a bit of money. The time and expense were enough that buying a SaaS product was almost always the smarter choice, even if it didn’t fit perfectly.

But that’s changing. I’ve had similar experiences with clients recently. We’ve had projects that benefit from visualizing massive amounts of interconnected information, the kind of thing where we would have had to rely on sophisticated pre-built systems or, worse, in some cases, just spreadsheets. Being able to build something custom let us take approaches that were far more effective. The features, the usability, the ability to shape the tool around the actual problem instead of the other way around. We built something in about three days that delivered more value than tools we’d been paying for without getting half the return.

Would it need to be maintained if it runs long term? Yes. But the initial investment was small enough, and the return immediate enough, that it shifts how you think about the decision.

There’s something else happening too. The software can adapt to you instead of you adapting to it. Especially in use cases like mine, where it’s a one-to-one relationship between me and the tool, that lowers a lot of the friction. I don’t have to learn a whole new system. I don’t have to deal with a major version update from a vendor that rearranges everything. I can make changes when I want to, at the pace I want to.

In a lot of these cases, the tools are more like reports than applications. You use them, and when the need changes, you build something new. The software is more ephemeral. Not every tool needs to be a product with a roadmap and a changelog.

Why I’m Not Releasing This as a Product

The thought has crossed my mind. Update Monitor works well. Other people might want something like it, especially now that MacUpdater is gone.

But the moment you start thinking about making something available to others, the requirements multiply. Take the update tracker as an example. Maintaining a version database for everyone’s applications, not just the ones on my machines, is a massive undertaking. It’s one of the reasons the MacUpdater team shut down. They couldn’t make the economics work, and the daily effort of maintaining that database was enormous.

And the database is only part of it. Then you need to maintain a client application that works across multiple versions of macOS, on different hardware. You need testing. You need support. You need a server that hosts the database, and with people connecting to that server, you need to protect and monitor that server, keep it updated, and maintain compatibility across all your client versions at the server level.

Then there’s the trust chain. If it’s just for me, I trust the sources I’m picking. I trust the code because I’m reviewing it as I go and testing it as I go. If I ship it to others, now they need to trust me. And I need to trust a wider set of sources, automations, and people. The responsibility grows in every direction.

It goes from being my tool to being a product. That’s a different game entirely. For a long time, we’ve just had to accept trust chains as they were because there was really only one way to get software: someone else built it and you decided whether to trust them. Now there’s another option, and that changes the equation.

Others Can Build Their Own

Here’s what interests me, though. I don’t need to release it as a product for other people to benefit from the idea. This article itself could be a starting point for someone’s assistant to help them build something like it. It won’t be identical to mine, and that’s fine. It’ll be tuned to their needs, their priorities, their threat model.

Maybe not everybody can do this at this moment. But more people can than could a few years ago. More people will be able to next month, next year. The barrier keeps dropping, and it seems to be dropping fast. Tools and projects that were only accessible to experienced developers are now within reach of people who can describe clearly what they want and think through how it should work. That seems to be the real factor right now. The building part has gotten dramatically easier. The harder part is being able to visualize what you need, describe it well, and keep it organized as you go.

There’s also a case for sharing, but maybe not in the way we’re used to. If a lot of people are independently having AI build roughly the same kind of tool, that does feel redundant. Repeated work, repeated compute, repeated time solving problems that have already been solved. But the sharing doesn’t have to look like maintained open source code. It could look more like sharing the specifications. Some markdown files, some diagrams, a clear description of what the system does and how it’s structured. Enough for someone else’s assistant to pick it up and build their own version. Repeatable systems that people can share and build on without anyone having to maintain a repository of code.

I think of it a bit like home improvements. You can build a custom shelf for your garage, and it works great because you know the space, the weight it needs to hold, and how you’re going to use it. Turning that into a product you sell at a hardware store is a completely different undertaking. But showing your neighbor the design so they can build their own version for their space? That’s easy, and everyone benefits.

The Pendulum

Now here’s where the cybersecurity part of my brain kicks in.

Custom software, even internal and personal software, still carries risk. Every tool I’ve built is code that runs on my systems. Code that processes data, makes network requests, and interacts with APIs. I trust it because I’ve been involved in building it, reviewing it, and testing it. But that’s me, and I do this for a living.

When you zoom out, the picture gets more interesting. More people building their own tools means a lot more code running on a lot more systems, with less formal review than we’d typically see from an established software vendor. And when any of that software ends up on the public internet, or gets distributed as a downloadable application, the stakes go up fast. You’re taking on responsibility for code running on other people’s computers, with all the vulnerability, stability, and maintenance concerns that come with it. Update servers to run. Supply chains to think about. These aren’t unsolvable problems. But they are problems, and they multiply quickly.

And it’s not like the existing world of software was perfect, either. People have always shipped flawed code. Plenty of applications have gone unreviewed due to understaffing, tight deadlines, or just not knowing where the problems were. Security incidents aren’t new. What seems new is the volume. There appears to be a sharp increase in software being built and deployed, with less formal review than before. The people doing it aren’t writing code in the traditional sense. They’re building. There’s code involved, but the relationship to it is different in many cases.

A lot of that software will work fine. Some of it will be deployed on internal networks and serve its purpose without issue. Some won’t. There will be incidents. There will be a correction. Best practices for responsibly building and maintaining personal software will emerge. Businesses may need one set of practices, individuals another, and insurance companies yet another.

And of course, we could be growing the most advanced form of technical debt we’ve ever experienced. Both at the small, personal level, where tools I built last month already need attention, and at the large, interconnected level, where millions of custom systems are quietly depending on APIs, models, and services that could change or disappear at any time.

I’m optimistic, though, that the security capabilities of our assistants and agents could grow alongside the building capabilities. The same tools that help build software can also help review it, audit it, and catch problems. They just need to be applied. If that keeps pace, and there’s reason to think it could, the pendulum could find an equilibrium faster than it otherwise would.

Where This Leaves Me

I’m going to keep building. The tools I’ve made for myself are useful, and the process of building them has been, for me, one of the more rewarding parts of working with AI. The unit of software is getting smaller. The audience for a piece of software might be one person. That changes what building, sharing, and maintaining software looks like, and we’re still early in figuring out the norms for it.